Crafting antiOOP: A 13-Step Journey into Functional Language (Part 2)

Hey guys, in this post we will focus on setting up the development environment and building the lexer for antiOOP.

Welcome back to part 2 of antiOOP, guys. If you are new here, check out the previous post first.

For the source code, CLICK HERE.

Let's jump right into development now. Things are getting serioussss……

Setting up the Development Environment

In this post, we’ll set up our development environment and create the initial structure for antiOOP. We’ll use Python 3.8+ for this one, so make sure you have it installed, bro/sis.
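Running `python --version` in a terminal is the quickest way to check your version. If you'd rather check from inside Python, here's a tiny sketch; the `check_python_version` function is just an illustration and isn't part of antiOOP.

```python
import sys
from typing import Tuple

# This post targets Python 3.8+.
MIN_VERSION: Tuple[int, int] = (3, 8)


def check_python_version(min_version: Tuple[int, int] = MIN_VERSION) -> bool:
    """Return True if the running interpreter is at least min_version."""
    # sys.version_info[:2] is a plain (major, minor) tuple, so tuple comparison works.
    return sys.version_info[:2] >= min_version


if __name__ == "__main__":
    if check_python_version():
        print(f"Python {sys.version.split()[0]} is good to go.")
    else:
        raise SystemExit(f"antiOOP needs Python {MIN_VERSION[0]}.{MIN_VERSION[1]}+")
```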

Project Structure

Create the following directory structure for our project:

```
antioop/
├── src/
│   ├── lexer.py
│   ├── parser.py
│   ├── ast.py
│   ├── interpreter.py
│   └── repl.py
├── tests/
│   ├── test_lexer.py
│   ├── test_parser.py
│   ├── test_interpreter.py
│   └── test_repl.py
├── examples/
│   └── hello_world.aoop
├── README.md
└── requirements.txt
```

Virtual Environment

Using a virtual environment for Python projects is really good practice: it keeps antiOOP's dependencies isolated from your system-wide Python packages. Run the following in your terminal from inside the antioop directory:

```bash
python -m venv antioop-env
source antioop-env/bin/activate  # On Windows, use: antioop-env\Scripts\activate
```

Dependencies

For now, we'll keep our dependencies minimal. We'll use pytest for testing. Create a requirements.txt file with the following content:

```
pytest==7.3.1
```

Install the dependencies:

```bash
pip install -r requirements.txt
```

Initial Code

Let’s get things started….

We’ll start with a basic structure for our lexer. Create a file src/lexer.py with the following content:

```python
from enum import Enum, auto


class TokenType(Enum):
    INTEGER = auto()
    FLOAT = auto()  # Declared for later; this first lexer only produces INTEGER
    PLUS = auto()
    MINUS = auto()
    MULTIPLY = auto()
    DIVIDE = auto()
    LPAREN = auto()
    RPAREN = auto()
    EOF = auto()  # Marks the end of the input


class Token:
    def __init__(self, type, value):
        self.type = type    # A TokenType member
        self.value = value  # The token's value, e.g. 42 or '+'

    def __repr__(self) -> str:
        return f"Token({self.type}, {self.value})"


class Lexer:
    def __init__(self, text):
        self.text = text
        self.pos = 0
        # Guard against empty input: indexing "" would raise IndexError.
        self.current_char = self.text[self.pos] if self.text else None

    def advance(self):
        """Move one character forward; current_char becomes None at the end."""
        self.pos += 1
        if self.pos > len(self.text) - 1:
            self.current_char = None
        else:
            self.current_char = self.text[self.pos]

    def skip_whitespace(self):
        while self.current_char is not None and self.current_char.isspace():
            self.advance()

    def integer(self) -> int:
        """Consume a run of digits and return it as an int."""
        result = ''
        while self.current_char is not None and self.current_char.isdigit():
            result += self.current_char
            self.advance()
        return int(result)

    def get_next_token(self):
        """Return the next token, or an EOF token once the input is exhausted."""
        while self.current_char is not None:
            if self.current_char.isspace():
                self.skip_whitespace()
                continue

            if self.current_char.isdigit():
                return Token(TokenType.INTEGER, self.integer())

            if self.current_char == '+':
                self.advance()
                return Token(TokenType.PLUS, '+')

            if self.current_char == '-':
                self.advance()
                return Token(TokenType.MINUS, '-')

            if self.current_char == '*':
                self.advance()
                return Token(TokenType.MULTIPLY, '*')

            if self.current_char == '/':
                self.advance()
                return Token(TokenType.DIVIDE, '/')

            if self.current_char == '(':
                self.advance()
                return Token(TokenType.LPAREN, '(')

            if self.current_char == ')':
                self.advance()
                return Token(TokenType.RPAREN, ')')

            raise Exception(f"Invalid character: {self.current_char}")

        return Token(TokenType.EOF, None)
```

Btw Appy, what is a Lexer!?

A lexer is a program that breaks down source code into a sequence of tokens. It's the first step in parsing code, converting raw text into meaningful units that can be processed by later stages of a compiler or interpreter.
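To see the idea in isolation, here's a minimal sketch of the same job done a different way. Instead of walking character by character like the Lexer above, it uses one combined regular expression from Python's `re` module. The `tokenize` function and the `(kind, value)` tuple format are assumptions for illustration; the kind names just mirror our TokenType names.

```python
import re
from typing import Any, List, Tuple

# One named group per token kind, tried left to right at each position.
TOKEN_SPEC = [
    ("INTEGER", r"\d+"),
    ("PLUS", r"\+"),
    ("MINUS", r"-"),
    ("MULTIPLY", r"\*"),
    ("DIVIDE", r"/"),
    ("LPAREN", r"\("),
    ("RPAREN", r"\)"),
    ("SKIP", r"\s+"),     # Whitespace: matched, then dropped
    ("MISMATCH", r"."),   # Any other character is an error
]
MASTER_RE = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(text: str) -> List[Tuple[str, Any]]:
    """Hypothetical regex-based lexer: returns (kind, value) pairs ending with EOF."""
    tokens = []
    for match in MASTER_RE.finditer(text):
        kind = match.lastgroup  # Name of the group that matched
        lexeme = match.group()
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise ValueError(f"Invalid character: {lexeme}")
        value = int(lexeme) if kind == "INTEGER" else lexeme
        tokens.append((kind, value))
    tokens.append(("EOF", None))
    return tokens


print(tokenize("(1 + 2) * 3"))
```

For `"(1 + 2) * 3"` this prints `[('LPAREN', '('), ('INTEGER', 1), ('PLUS', '+'), ('INTEGER', 2), ('RPAREN', ')'), ('MULTIPLY', '*'), ('INTEGER', 3), ('EOF', None)]`. The hand-written version is easier to extend with context-sensitive rules later, while the regex version keeps every token pattern in one table.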

What about tokens!?

A token is the smallest meaningful unit of a programming language, identified by the lexer. It can be a keyword, identifier, literal, operator, or punctuation symbol.
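One small thing to be aware of: the Token class above doesn't define `__eq__`, so `Token(TokenType.INTEGER, 7) == Token(TokenType.INTEGER, 7)` is `False` (Python falls back to identity), and tests can only compare individual fields like `.type`. A possible alternative, sketched here assuming you're happy to swap in a frozen dataclass, gets equality and a readable repr for free. The trimmed TokenType below is a stand-in so the snippet runs on its own.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Any


class TokenType(Enum):
    # Trimmed stand-in for the enum in src/lexer.py, just enough for this sketch.
    INTEGER = auto()
    PLUS = auto()
    EOF = auto()


@dataclass(frozen=True)
class Token:
    """Alternative Token: @dataclass generates __init__, __repr__ and __eq__."""
    type: TokenType
    value: Any


# Tokens with equal fields now compare equal, so tests can compare whole tokens.
print(Token(TokenType.INTEGER, 7) == Token(TokenType.INTEGER, 7))  # True
print(Token(TokenType.PLUS, "+"))  # Token(type=<TokenType.PLUS: 2>, value='+')
```

`frozen=True` also makes tokens immutable and hashable, which suits a functional-language project.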

This initial lexer can tokenize basic integer arithmetic expressions: whole numbers, the four operators + - * /, and parentheses. We’ll expand on this in future posts. Stay tuned….. (subscribe….. plzz…)
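The FLOAT token type is declared but never produced yet. As a rough idea of one way it could work, here's a standalone sketch that scans a number which may contain a decimal point. `scan_number` is a hypothetical helper, not the post's code: it takes the text and a start index instead of using the Lexer's `current_char`, so it runs on its own.

```python
from string import digits
from typing import Tuple, Union


def scan_number(text: str, pos: int) -> Tuple[str, Union[int, float], int]:
    """Hypothetical helper: read an INTEGER or FLOAT literal starting at text[pos].

    Assumes text[pos] is a digit (the Lexer checks this before calling integer()).
    Returns (kind, value, next_pos).
    """
    start = pos
    while pos < len(text) and text[pos] in digits:
        pos += 1
    # A '.' only belongs to the number if a digit follows it, so "7." stays INTEGER 7.
    if pos + 1 < len(text) and text[pos] == "." and text[pos + 1] in digits:
        pos += 1
        while pos < len(text) and text[pos] in digits:
            pos += 1
        return "FLOAT", float(text[start:pos]), pos
    return "INTEGER", int(text[start:pos]), pos


print(scan_number("3.14 + 2", 0))  # ('FLOAT', 3.14, 4)
print(scan_number("42)", 0))       # ('INTEGER', 42, 2)
```

Requiring a digit after the dot is a design choice; a real antiOOP grammar might also accept forms like `.5` or `1e3`.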

Testing

What!? Testing!? Wasn’t this a functional programming tutorial? Yes, boss, but testing is as important as developing: it tells us whether our program works as expected and whether a new feature we added breaks existing ones.

Let’s write a simple test for our lexer. Create a file tests/test_lexer.py:

```python
from src.lexer import Lexer, TokenType


def test_lexer_integers():
    lexer = Lexer("123 456")
    assert lexer.get_next_token().type == TokenType.INTEGER
    assert lexer.get_next_token().type == TokenType.INTEGER
    assert lexer.get_next_token().type == TokenType.EOF


def test_lexer_operators():
    lexer = Lexer("+ - * /")
    assert lexer.get_next_token().type == TokenType.PLUS
    assert lexer.get_next_token().type == TokenType.MINUS
    assert lexer.get_next_token().type == TokenType.MULTIPLY
    assert lexer.get_next_token().type == TokenType.DIVIDE
    assert lexer.get_next_token().type == TokenType.EOF


def test_lexer_parentheses():
    lexer = Lexer("()")
    assert lexer.get_next_token().type == TokenType.LPAREN
    assert lexer.get_next_token().type == TokenType.RPAREN
    assert lexer.get_next_token().type == TokenType.EOF


def test_lexer_complex_expression():
    lexer = Lexer("(1 + 2) * 3 - 4 / 5")
    tokens = [lexer.get_next_token().type for _ in range(12)]  # 11 tokens + EOF
    assert tokens == [
        TokenType.LPAREN, TokenType.INTEGER, TokenType.PLUS, TokenType.INTEGER, TokenType.RPAREN,
        TokenType.MULTIPLY, TokenType.INTEGER, TokenType.MINUS, TokenType.INTEGER,
        TokenType.DIVIDE, TokenType.INTEGER,
        TokenType.EOF,
    ]
```

Ok Ok Ok, before running this file, run the following command in your terminal:

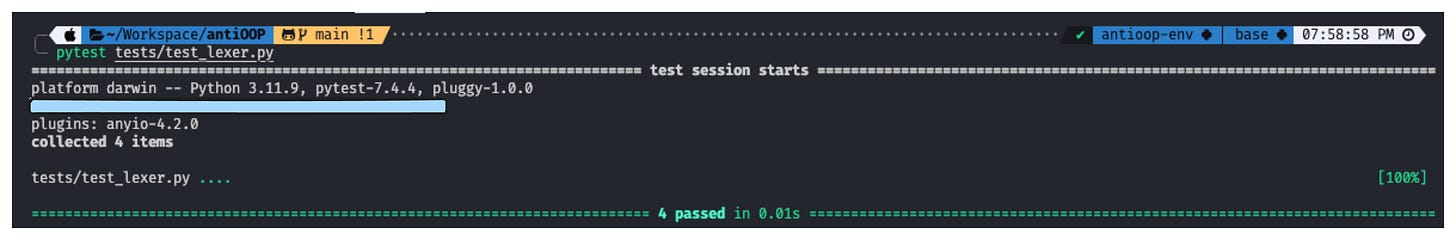

```bash
export PYTHONPATH=/path/to/antioop:$PYTHONPATH
```

Replace /path/to/antioop with the absolute path to your project folder. Now run the tests using pytest:

```bash
pytest tests/test_lexer.py
```

Conclusion

We've set up our development environment, created the initial project structure, and implemented a basic lexer with tests. In the next post, we'll dive deeper into designing the core language features of antiOOP.

Stay tuned for more exciting developments in our journey to build a functional programming language!

Would love to connect with you guys: Twitter/X

Part 3 OUT NOW!!!